Google, Microsoft and xAI agree to provide US government with early AI model access

A day after The New York Times reported that the Trump administration was considering whether to tighten its oversight of the AI industry, Google, Microsoft and xAI have signed agreements to provide the federal government with early access to their AI systems. According to The Wall Street Journal, the Commerce Department's Center for AI Standards and Innovation (CAISI) will evaluate new models the companies develop.

"Independent, rigorous measurement science is essential to understanding frontier AI and its national security implications," CAISI director Chris Fall told The Journal. "These expanded industry collaborations help us scale our work in the public interest at a critical moment." The deal reportedly calls for Google, Microsoft and xAI to provide their models to CAISI with reduced or even disabled safeguards so the organization can probe them for national security-related capabilities and risks.

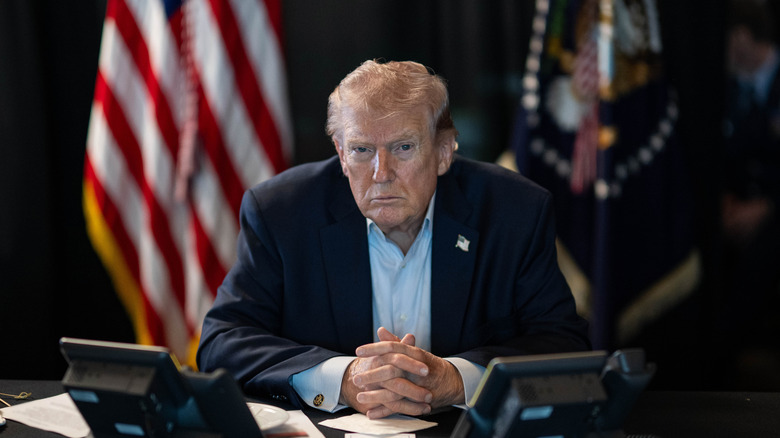

As mentioned, the agreement follows reporting that the Trump administration wanted to introduce new AI regulation. As of May 4, the White House was reportedly considering creating a working group to oversee development of future AI models, with the committee having the power to review new models ahead of their public release. At first glance, this new approach would appear to mark a reversal from the laissez-faire regulatory path Trump outlined in his AI Action Plan, but as I argued in my piece on the proposal, the president has, from the start, sought to bend the AI industry to his will.

For instance, you can trace everything that's happened since the start of the Pentagon's feud with Anthropic back to an executive order Trump signed on the day he announced the AI Action Plan. That feud has seen the defense department seek to label the company a supply chain risk after it insisted on safeguards designed to prevent its tech from being used for mass surveillance and autonomous weapons. "Preventing Woke AI in the Federal Government" saw the president prohibit federal agencies from procuring AI systems that "manipulate responses in favor of ideological dogmas such as DEI." What the president was doing then was creating an ideological test that companies must pass, and Anthropic later failed that test. It should be no surprise then that most companies have chosen to sign deals with the federal government rather than be put through their own tests.